Introduction

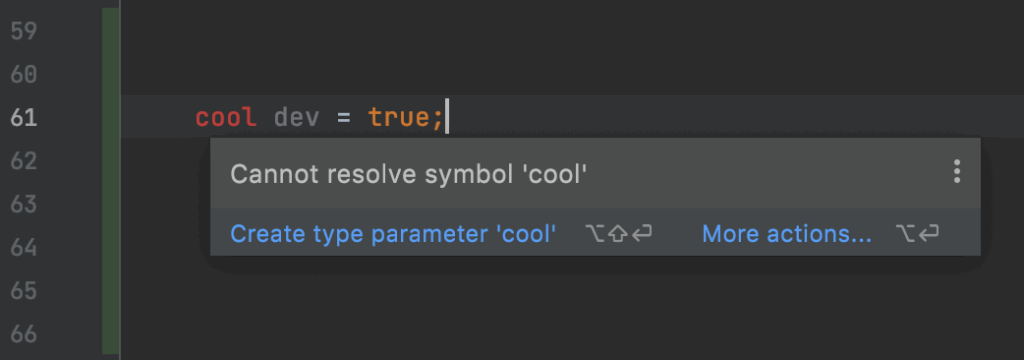

Parsing is the process of turning a linear sequence of characters into an organized, tree-shaped structure. Then, a computer program can further elaborate on such a structure to produce useful results. For example, it could tell us that “cool” isn’t a valid data type, even though we’d so much want it to be.

Jokes aside, nowadays we tend to consider parsing a well-known, tractable problem. We have solid theory modeling it, and the problems we face with it are most often non-functional: for example, performance, good error reporting, or integration with a given software environment.

We also have tools, such as ANTLR, to generate parsers from a high-level, declarative specification. These tools rely on proven technology that has been used in many successful projects. Here at Strumenta, we love ANTLR, but long before Strumenta and even ANTLR were a thing, people were already using parser generators (or “compiler-compilers”) effectively.

Probably as a consequence of our understanding of parsers, and the tools we use, we have learned to design languages in certain ways more than in others. Most modern languages are amenable to being recognized by a generated parser. Some language designers even publish an official, formal grammar for their language.

However, things haven’t always been so easy, and as we all know, the past sometimes comes back to bite you. In this article, we’ll explore some of the difficulties in parsing legacy languages. In particular, we’ll be looking at the SAS language and its macros.

Before we begin, though, we’ll discuss what we mean by “legacy languages”.

What Makes a Language “Legacy”, Anyway?

SAS, the company, was founded in 1976, but the SAS language dates back to 1966. It started as a project of North Carolina State University. For comparison, the C language is from the early 1970s; however, it was born as an improvement of the B language, which dates back to 1969.

So, we can say that C is only a few years younger than SAS. Certainly, less than ten years younger. Now, even a few years can matter a lot in the world of software development, especially if we’re referring to pivotal years such as the late 60s and early 70s.

However, we hardly ever hear C mentioned as a legacy language, while we can say that SAS is one. Clearly, age alone is not enough to explain why we consider a language “legacy”. Nor is adoption. Of course, C has “won” thanks to Unix, and Unix has “won” also thanks to C. But we cannot compare SAS with C in their adoption, because SAS is a high-level language, which is domain-specific to a certain degree, while C is a systems programming language. In its niche, SAS has stayed relevant for decades, and indeed, the SAS company is operating successfully to this day.

It’s not even a matter of evolution. C is today almost the same language that it was at its inception. Yes, we’ve had C++, Java, C#, Rust, and countless other languages that we may call C-like or C-inspired; we’ve seen a great evolution in compilers, editors, libraries, frameworks, testing, etc. But the C language proper is still mostly the same as in the early 1970s. On the other hand, SAS has evolved to include, for example, HTTP and machine learning capabilities.

Why Is SAS a Legacy Language?

We could say that a language is legacy if it’s used primarily to maintain existing systems, rather than create new ones. That usually happens when companies and developers perceive the language as a liability, rather than an asset. Something to move away from, if only we had the necessary resources. Something we’re stuck with – until better times come.

However, that still doesn’t answer our question; it’s a piece of circular logic: companies want to move away from a language because it’s legacy, and they can’t replace their retiring developers; but that language is only legacy because people are moving away from it.

Viewing it from another angle, we could say that a language is a legacy language when it looks and feels like one. SAS editors can’t match modern IDEs, for example. In contrast, with tools like CLion or Visual Studio, we can write C code in a modern environment. The SAS language itself, with its syntax and terminology, looks somewhat strange, far removed from the languages we’re familiar with.

Now, the question becomes: why does SAS look aged? Why do tools for working with SAS look like old-fashioned Windows applications?

In part, it’s an effect of market share. SAS has but a tiny fraction of the popularity of C, so it cannot possibly receive the sheer amount of effort and funding that we’ve collectively dedicated to C. We’re back to circular reasoning here.

However, in part, SAS looks old, and tools to work with SAS lag behind tools for other languages, because SAS doesn’t follow what we now know to be good language design practices.

So, that will be our definition of legacy language for the rest of this article. We’ll explore some of the ways in which SAS doesn’t follow best practices and why that makes it hard to parse.

Note that legacy doesn’t mean “bad” or unfit for a given purpose. Many companies still base their core business activities on legacy technologies that serve them well. Not everybody needs or wants a fancy IDE, or the latest bloated framework, packed with features they won’t use and extra complexity they’ll have to learn about. However, with legacy languages, there are certain risks and costs that you’ll have to face. Examples include vendor lock-in, high cost of operation, and difficulty in finding developers.

The Importance of a Language Grammar

We’ve said this in the introduction, but it’s important to reiterate: nowadays, we take it for granted that at the foundation of every language lies a formal grammar specification. Furthermore, if we appropriately write down that grammar, we can generate a parser from it using state-of-the-art tools.

Sure, many real-world compilers and editors use manually written parsers, for various reasons: performance, fine-tuned error reporting, etc. Sure, sometimes a grammar is only provided for documentation purposes, and it’s not available in a single machine-readable form.

But it remains true that, conceptually, we can write such a grammar for any modern language – where, by “modern”, we really mean “not legacy”. This is very important because it means that we can predict, with better accuracy, the effort that we’ll need to implement a new tool or feature that needs to have “language intelligence”. For example, code completion in an editor, or support for a new language construct in a transpiler.

Also, grammars for modern languages usually follow patterns, many of which we now consider to be “conventional wisdom”, that make it possible to have better tool support. For example, in SQL, “select” comes before “from”; in Microsoft’s LINQ, instead, “from” comes first, and that allows the IDE to provide better code completion for the columns in the select clause. In fact, knowing which tables we listed in the from clause, and knowing which columns are in each table, the IDE can limit the suggested columns and only show the relevant ones.

In contrast, many legacy languages weren’t designed with such considerations in mind. So, even with modern technology, we simply cannot obtain the same level of intelligence in our tools. And, as we’ve said before, we human developers of today will probably also have trouble “parsing” such languages when reading them, because we’ve effectively trained ourselves to rely on such conventional wisdom and we find it awkward when a language doesn’t follow our expectations.

The Case of SAS Macros

Macros in SAS are an example of what we’ve just described. Let’s see how they work and how we may want to parse them.

Macros in Programming Languages

A macro is, broadly speaking, a source-to-source transformation. The term comes from the ancient Greek prefix for “large”: originally, it referred to the aggregation of several simple instructions into a bigger “macro instruction”. For example, this applies to “keyboard macros” – the recording of a sequence of keystrokes into a repeatable command – and to assembly language macros that allow programmers to create new, high-level instructions from a sequence of assembly statements. Effectively, in assembly code, macros are the only form of abstraction.

Later, various forms of macros have been introduced in many higher-level programming languages. It’s useful to distinguish the “macro language” – the language that we use to define the macros – from the “target language” that is the output of a macro.

Most languages in the Lisp family support macros that are basically functions executed at compile time; in that case, the macro language and the target language are the same. The arguments that macro functions receive are a representation of code as objects, i.e., an abstract syntax tree. Variations of this approach resurface in other programming languages that are more recent, such as Groovy with its AST transformations, and Scala.

Other languages, most notably C, have adopted the practice of passing source code through a preprocessor before compiling it. The preprocessor has the task of expanding macro instructions. In that case:

- The macro language is limited and different from the target language;

- Macros operate on raw text or lexical tokens that do not necessarily represent valid pieces of code.

Essentially, C-style macro preprocessors are text templating languages, not so different from Freemarker, Jinja, and the like, but with logic that is specific to the generation of source code (such as switches for conditional compilation).

Macros in SAS

In the SAS language, macros are similar to C preprocessor macros: they are text-to-text transformations that are expanded before the program is executed. And, like in C, macros can take parameters and expand into other macros. So, they support some form of abstraction, like functions in a procedural language.

However, there are significant differences with C macros. Let’s review them.

- The SAS macro language is richer. Besides text substitution, the C preprocessor supports only a limited form of control flow: conditional directives that test whether a macro is defined or evaluate constant integer expressions. In contrast, SAS macros can evaluate richer expressions, and have conditional statements (the %IF-%THEN/%ELSE statement) and loops. The SAS macro language is, effectively, a full programming language, including I/O facilities.

- In SAS, there’s no separate compilation phase. C is a compiled language, and macro preprocessing is only the first step in the compilation pipeline. SAS is an interpreted language, without a clear separation between different phases of execution.

In particular, it’s important to understand the second point and how SAS expands macros. The SAS engine reads in the source code token by token. When it recognizes that it has read an entire statement, it executes it by interpretation.

In this process, when the interpreter finds a token that starts a macro instruction, it switches to “macro mode”: it becomes an interpreter for the macro language, rather than the regular SAS language. In this mode, it will continue to read token by token. When it finishes reading the macro statement, the interpreter will execute it: i.e., it will perform macro expansion. It will insert the body of the macro – as text – into the interpreter’s input stream, and it will restart reading SAS code token by token.

No Clear Separation Between Phases

Worse still, the interpretation of regular code can influence the macro environment. Take the following example:

proc sql; select age into :age from users where id = 1; %if &age > 18 %then … %else … %end;

Here, the SAS engine executes a SQL query and stores the contents of the age column into the age macro variable. In the next instruction, the %if macro will expand into different variants of the code, depending on the age variable’s value.

So, we can see how the macro-expansion time and the runtime are intertwined. There is no separation between the different phases of execution. That simply wouldn’t be possible with a preprocessor that works on text files. How would such a preprocessor resolve the age variable just by looking at the program text?
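To make the intertwining concrete, here’s a toy model in Python. It is not SAS: the mini-language (let, %if, print) and the run function are invented for this sketch. It only shows that the text a macro expands into can depend on a value produced by a statement that has already been executed, and that the expanded text re-enters the input of the same interpreter.

```python
from collections import deque


def run(source: str) -> list[str]:
    """Run a toy program, expanding macros while executing statements.

    Invented mini-language (an assumption of this sketch, not SAS syntax):
      let NAME = INT              runtime statement: stores a variable
      %if NAME > INT THEN | ELSE  macro: pushes THEN or ELSE back as source text
      print TEXT                  runtime statement: appends TEXT to the output
    """
    pending = deque(s.strip() for s in source.split(";") if s.strip())
    env: dict[str, int] = {}
    output: list[str] = []
    while pending:
        statement = pending.popleft()
        if statement.startswith("%if "):
            # Macro expansion: the chosen text depends on the runtime environment.
            _, name, _, limit, branches = statement.split(" ", 4)
            then_text, else_text = branches.split("|")
            chosen = then_text if env[name] > int(limit) else else_text
            pending.appendleft(chosen.strip())  # expanded text re-enters the input
        elif statement.startswith("let "):
            name, value = statement[4:].split("=")
            env[name.strip()] = int(value)
        elif statement.startswith("print "):
            output.append(statement[6:])
        else:
            raise ValueError(f"unknown statement: {statement!r}")
    return output


print(run("let age = 42; %if age > 18 print adult | print minor"))
print(run("let age = 7; %if age > 18 print adult | print minor"))
```

Changing the value assigned by let changes which statement the interpreter sees next, so a tool that only looks at the program text cannot know, in general, what code will run.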

Indeed, those two properties combined – having a rich macro language, which can access the interpreter’s environment – make SAS basically unparsable in the general case. By that, we don’t mean that it’s impossible to parse; SAS interpreters exist, after all. However, we cannot write a formal grammar that can correctly recognize every possible SAS program with macros.

In fact, a macro, rather than just printing the value like in the simple case we’ve just seen, could expand into arbitrary code fragments – and it may do so conditionally, depending on the results of a SQL query! On the other hand, we could write down a grammar for the SAS language without macros, and probably one exists somewhere already.

In other words, a complete parser of SAS must also implement a complete interpreter of SAS, because a macro could generate code according to information available only during runtime, such as SQL query results, files on the file system, the current date, and so on. And, to compute such information, it may invoke SAS built-in functions.

Of course, SAS developers don’t go unnecessarily wild with their macros most of the time; while it’s possible to write a macro that expands to completely different code depending on the current date, there is typically no reason to do so. Thus, with a pinch of cleverness, we can still parse most relevant SAS programs.

Parsing Macros in SAS

To Parse, or Not To Parse? This Is Not a Binary Question

Many people have a schematic idea of parsing: either it works, or it doesn’t. That is to say, the output of a parser can only be one of these two:

- an ideal tree representing every structure and information that is relevant in the source code;

- an error.

However, we know that reality is more nuanced. Different parsers for the same language can have differing levels of quality. That is, whenever a parser successfully completes, the resulting tree will represent the information in the source file with varying degrees of accuracy and ease of use. After all, we can parse every source file in any language, past, present, and future, with the following grammar:

sourceFile: .* EOF;

That is, any sequence of tokens, terminated by the end of the file. But, of course, that will give us zero information about the structure of the code. This is an extreme, degenerate case. But we can apply the same principle to only parts of a source file. Indeed, while we develop a parser, we don’t go from not parsing anything to parsing everything in a single step. Rather, over time we build a series of parsers that recognize a progressively larger subset of the language.

And the grammar is only part of the story; some information we can only reconstruct in a second or third pass, when we build an abstract syntax tree or when we post-process it to compute additional properties that we’re interested in (for example, the correctness of expression types). Often, trying too hard to extract every piece of information and validate every constraint using only the grammar doesn’t lead to the best solution.

Not only can a parser have different levels of quality, but the requirements we place on a parser also vary according to the application. A parser to implement code suggestions in an editor must give an answer in the shortest time possible and must be able to recover from malformed input, even if that results in diminished precision. A parser which is the first stage of a compiler, on the other hand, must be precise; it may reject malformed input without trying too hard to recover from errors, and it may optimize for throughput on large numbers of files rather than for quick response times on a single file.

So, to summarize, we shouldn’t be asking: “Can we parse SAS code with macros, yes or no?”. Rather, we should be asking: “How well can we parse SAS code with macros? Which tradeoffs are we going to prioritize?”

Setting Our Goals

Before going forward, let’s write down what we want to accomplish. “Parsing macros” is really three different tasks:

- To parse regular code that is interrupted by macros;

- To parse the macro language;

- To parse the code that a macro will expand into.

The above may be unclear, so let’s look at each point with an example.

Parsing Code With Macro Expansions

Our first goal is to extract as much information as possible from regular SAS code which is interrupted by macros. Let’s look at the following example:

data Prod.Something; set %do Gen_Num = 0 %to 10; foo.attr(GenNum = &Gen_Num drop=ACCOUNT) %end;; end;

Here, we have a DATA step that contains a SET statement. Rather than spelling out the 11 components of the SET statement (the loop runs from 0 to 10 inclusive), the developer used a macro to generate them.

Now, in our grammar, the SET statement may be defined as:

    dataSetStatement: SET dataSetSingle+ dataSetStatementOption*;
    dataSetSingle: source=dataset (EQUAL dest=dataset)?;
    dataSetStatementOption: KEY EQUAL identifier (SLASH UNIQUE)? | END EQUAL identifier;

This is only a partial definition, but let’s not concentrate on the details. The issue is that our grammar will fail to recognize the code we’ve listed earlier as a SET statement, because it’s interrupted by macro code.

We want at least to recognize that we have a SET statement that ends in a semicolon before the “end;” token, even though we won’t be able to obtain the actual code of the SET statement from the parser.

To tackle this problem, we can adopt different strategies. Let’s keep in mind that macro code can appear anywhere and, in theory, it could also break tokens apart, so, in the very general case, it will be very hard to parse all instances. However, most SAS code is not so extreme in its use of macros.

A Pragmatic Approach: Special-Casing

One approach, which we’ve used, is to be pragmatic and identify, in a set of sample SAS code files, the cases where macros are used. Typically, a codebase or a set of codebases from the same organization will exhibit some coding patterns. Such patterns define an informal subset of the language used in that organization, and we may choose to tailor our parser to that subset.

For example, with one customer, we encountered several SET statements similar to the one we’ve shown here, but no RENAME statement interrupted by macros. So, we introduced a special provision for macros that appear in the middle of a SET statement:

dataSetSingle: macroStatement | source=dataset (EQUAL dest=dataset)?;

This, of course, is not a general strategy. Not only will it parse just a subset of SAS, but if the exceptions are many, the grammar will accumulate noise that will make it hard to read and reason about. Imagine if at any point in our rules we had to add an alternative for macroStatement! Fortunately, in practice that’s not necessary.

A More Thorough Approach 1: Filtering in the Lexer

Another, more complex approach could be to instruct the lexer to place macro code on a separate channel. Then, the SAS parser won’t ever see it; we’ll still be able to process it with a separate parser, or even a different entry point rule in the same parser.

This is the approach that is commonly used for whitespace and other tokens (such as comments) that can appear anywhere in the code. We don’t want to pollute the parser with a lot of (WHITESPACE | COMMENT)* noise, so we put those tokens on a separate channel. They will still end up in the token stream that is the input of the parser, but the parser will ignore them.

Unfortunately, doing that for macros is not as simple, because macro code has structure. Recognizing where a macro starts is easy since all macro tokens in SAS begin with a percent (%) character. However, the lexer would also need to understand where a macro statement ends, and that’s more problematic because there’s not a single stop token. Some macro statements end with a semicolon, others are terminated by “%end;”. Macro definitions are terminated by “%mend;”, instead.

Also, we can nest macro statements in blocks, so the lexer would need to become rather advanced. In practice, it would become a parser capable of recognizing at least the general block structure of macro code. This is not what a lexical analyzer is fit for, although it’s technically possible. After all, in ANTLR, we can even use a manually written lexer that implements whatever logic is necessary.

Still, that wouldn’t completely solve the problem in the parser either, because omitting macros could result in malformed expressions. Recall the previous example:

set %do Gen_Num = 0 %to 10; foo.attr(GenNum = &Gen_Num drop=ACCOUNT) %end;;

If the parser doesn’t see the macro, it will be left with:

set;

In this specific case, we could relax the grammar so that it accepts an empty set expression, and possibly check that there is at least one thing to set in a later phase. However, we cannot possibly anticipate all the places where a macro would go. We’re back to example-driven special cases, as in our first approach.
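The following Python sketch illustrates both the idea and its cost. It is a toy, not an ANTLR lexer: the Token class, the channel numbers, and the regular expression are assumptions made for the example, and it only handles %do … %end; blocks. Even so, the lexer already has to count nesting levels and remember that the outermost %end is followed by a semicolon, which is the kind of structural knowledge that normally belongs in a parser.

```python
import re
from dataclasses import dataclass

DEFAULT, MACRO = 0, 1  # channel ids, arbitrary for this sketch
TOKEN_RE = re.compile(r"%\w+|&\w+|\w+|\S")


@dataclass
class Token:
    text: str
    channel: int


def lex(source: str) -> list[Token]:
    """Tokenize, sending %do ... %end; blocks to the MACRO channel."""
    tokens = []
    depth = 0        # nesting level of open %do blocks
    closing = False  # True between the outermost %end and its ';'
    for match in TOKEN_RE.finditer(source):
        text = match.group()
        keyword = text.lower()
        if keyword == "%do":
            depth += 1
        in_macro = depth > 0 or closing
        tokens.append(Token(text, MACRO if in_macro else DEFAULT))
        if keyword == "%end" and depth > 0:
            depth -= 1
            closing = depth == 0
        elif closing and text == ";":
            closing = False
    return tokens


def on_channel(tokens: list[Token], channel: int) -> list[str]:
    """Return the text of the tokens that a consumer of `channel` would see."""
    return [token.text for token in tokens if token.channel == channel]


tokens = lex("set %do Gen_Num = 0 %to 10; foo.attr(GenNum = &Gen_Num drop=ACCOUNT) %end;;")
print(on_channel(tokens, DEFAULT))
```

A parser reading only the default channel is left with the tokens of an empty SET statement, while the macro tokens remain available on the other channel for a separate pass.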

Still, this solution would have the benefit of making the parser handle unexpected macros better. That’s because, when we omit the tokens that make up macro code, we are left with pure SAS code which most of the time will be correct or mostly correct. Though it’s still possible to have a macro expand into code that closes a statement and begins another, that’s a rare corner case. For example:

    set %do; a = b; rename %end; a = c;
    # This will expand into:
    set a = b; rename a = c;
    # However, omitting the macro, the parser would see:
    set a = c;

We’ve never actually tried this approach as it seems difficult to implement well. However, it would have the benefit of keeping the parser simple, at the cost of overly complicating the lexer.

A More Thorough Approach 2: Using Error Recovery

A variation on the last approach could be to use the error recovery strategy of our ANTLR parser to process unexpected macros. As in the previous solution, we would keep the parser free of special cases, at the cost of complicating some other part of the pipeline. In this case, that would be the error handler.

In an ANTLR parser, a dedicated object called the error handler defines the strategy to use in case of a parse error. The default strategy is to try to recover from the error by inserting or removing a single token, which works in many real-world situations. Another built-in strategy that we can plug in simply fails with an error without attempting any recovery.

However, we’re not limited to predefined error handlers. We can add a new strategy such that, when the parser encounters an unexpected token, it will try to parse the code as a macro statement. If successful, it will insert the parse tree node representing the macro statement as a child into the main parse tree, and resume normal operations.

To do the above, we can leverage the fact that the parser’s input stream, a TokenStream, is a stateful object that keeps track of the current token. We can invoke operations to advance the stream to a subsequent token, or reset it to an earlier position. So, we may sketch an error strategy like the one we’ve described as follows:

    import org.antlr.v4.runtime.DefaultErrorStrategy
    import org.antlr.v4.runtime.Parser
    import org.antlr.v4.runtime.Token

    class MacroErrorStrategy : DefaultErrorStrategy() {
        override fun singleTokenDeletion(recognizer: Parser): Token? {
            // recognizer.context is the parse tree node being built
            if (recognizer.context == null) {
                return super.singleTokenDeletion(recognizer)
            }
            // Remember where we are, so that we can rewind if this is not a macro
            val index = recognizer.inputStream.index()
            // Try to parse a macro statement starting at the current position
            val macroParser = SASParser(recognizer.inputStream)
            val macroStatement = macroParser.macroStatement()
            return if (macroStatement != null && macroParser.numberOfSyntaxErrors == 0) {
                // addChild creates the children list if the node has none yet
                recognizer.context.addChild(macroStatement)
                // -1 because the caller will call consume()
                recognizer.inputStream.seek(recognizer.inputStream.index() - 1)
                reportMatch(recognizer)
                recognizer.currentToken // any non-null token is OK
            } else {
                // Not a macro statement: rewind and use the default recovery
                recognizer.inputStream.seek(index)
                super.singleTokenDeletion(recognizer)
            }
        }
    }

This is not a complete implementation, because in our SAS grammar we have more entry points into the macro language than just macroStatement. But you get the idea.

Like the previous solution, this will keep the parser free of special cases to handle macros in all sorts of places. And, just like the previous solution, it still has the problem that eliding macros may result in incomplete or mismatching code. However, in an error handler we have more leeway for adding context-dependent strategies, such as adding extra tokens to the stream to keep the parser happy. Again, this is not a general solution; some case-by-case tweaks will be necessary.

Also, the choice of inserting the parsed macro code as a node in the resulting parse tree may have consequences on the code consuming the tree, which may have to deal with unexpected children. ANTLR generates parse tree nodes that access children by type and not by index, so it’s unlikely that adding extra children would break the parse tree. However, some consuming code that traverses all the children for some reason may still need adjustments. We could also devise a variation where macro code is not part of the main tree and is instead returned on a different channel.
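The mechanics of “remember the position, try the macro parser, rewind on failure” can be tried out without ANTLR. The Python sketch below uses a hand-written toy parser; TokenStream, parse_macro, and parse_set are hypothetical names, the macro parser only understands a non-nested %do … %end; block, and, following the variation just mentioned, recognized macros are collected in a separate list rather than added as children of the main node.

```python
class TokenStream:
    """Minimal stateful stream with the index/seek operations used for rewinding."""

    def __init__(self, tokens):
        self.tokens = tokens
        self.pos = 0

    def index(self):
        return self.pos

    def seek(self, index):
        self.pos = index

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def consume(self):
        token = self.peek()
        self.pos += 1
        return token


def parse_macro(stream):
    """Try to read '%do ... %end ;'. Return its tokens, or None on failure."""
    if stream.peek() != "%do":
        return None
    body = []
    while stream.peek() is not None:
        token = stream.consume()
        body.append(token)
        if token == "%end":
            if stream.peek() == ";":
                body.append(stream.consume())
                return body
            return None
    return None  # input ended before %end


def parse_set(stream):
    """Parse 'set <name>* ;', recovering from embedded macro statements."""
    if stream.consume() != "set":
        raise SyntaxError("expected 'set'")
    node = {"datasets": [], "macros": []}
    while stream.peek() != ";":
        token = stream.peek()
        if token is None:
            raise SyntaxError("unexpected end of input")
        if token.isidentifier():
            node["datasets"].append(stream.consume())
            continue
        # Unexpected token: remember the position, then try the macro parser.
        start = stream.index()
        macro = parse_macro(stream)
        if macro is None:
            stream.seek(start)  # rewind, as if the attempt never happened
            raise SyntaxError(f"unexpected token {token!r}")
        node["macros"].append(macro)
    stream.consume()  # the closing ';'
    return node


print(parse_set(TokenStream("set a %do i = 1 %to 2 ; b %end ; c ;".split())))
```

Note that the dataset b inside the loop body is not reported among the datasets: recovering from the macro tells us where the statement ends, not what the macro expands into.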

Parsing the Macro Language

Let’s have a look at our second goal: actually parsing the SAS macro language.

The macro language itself is nothing special: it has functions, variables, and familiar control structures like the if statement and a do statement that can serve either as a loop or as a block. References to variables start with the ampersand character ‘&’, so they’re easy to identify in the lexer. Keywords start with a percent character ‘%’.

However, recall that the SAS macro language is interpreted and operates on the stream consumed by the SAS interpreter. Macro statements are better understood as instructions to modify a stream of code while it’s being read. Take the put statement as an example:

%put START TIME: %sysfunc(datetime(),datetime14.);

In a language such as Python, this could be written roughly like the following:

print(f"START TIME: {format(datetime(), 'datetime14')}")

Notice how Python uses strings, while apparently there’s no string syntax in the SAS macro code. This is because, for the purpose of the SAS macro expander, any token which is not part of the macro language is text. In the example above, “START TIME:” is parsed as a string.

This is not specific to the put statement. The whole macro language works like that. Let’s see an example that we’ve already encountered:

set %do Gen_Num = 0 %to 10; foo.attr(GenNum = &Gen_Num drop=ACCOUNT) %end;;

Here, “foo.attr(GenNum = ” is a literal string that the macro will expand into. After it, the macro will output the value of the Gen_Num variable followed by another literal string, “ drop=ACCOUNT)”.
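To see why every non-macro token counts as text, here’s a small Python sketch that mimics the expansion of a single, non-nested %do loop by plain string manipulation. The expand_do function and its regular expression are our own simplification, not how the SAS macro processor is implemented; they ignore nesting, %by, and every other macro feature.

```python
import re

# Matches: %do VAR = START %to STOP; BODY %end;
DO_LOOP = re.compile(
    r"%do\s+(?P<var>\w+)\s*=\s*(?P<start>\d+)\s+%to\s+(?P<stop>\d+)\s*;"
    r"(?P<body>.*?)%end\s*;",
    re.IGNORECASE | re.DOTALL,
)


def expand_do(source: str) -> str:
    """Replace each %do loop with the concatenated copies of its body text."""

    def expand(match: re.Match) -> str:
        reference = re.compile(rf"&{match['var']}\b", re.IGNORECASE)
        copies = []
        # The loop bounds are inclusive, hence the + 1.
        for value in range(int(match["start"]), int(match["stop"]) + 1):
            # The body is copied verbatim; only the variable reference changes.
            copies.append(reference.sub(str(value), match["body"]))
        return "".join(copies)

    return DO_LOOP.sub(expand, source)


expanded = expand_do(
    "set %do Gen_Num = 0 %to 10; foo.attr(GenNum = &Gen_Num drop=ACCOUNT) %end;;"
)
print(expanded)
```

The body is never interpreted as SAS code during expansion: it’s copied once per iteration, from 0 to 10 inclusive, and only afterwards does the resulting text become a SET statement.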

So, our parser will have to recognize every non-macro token as text while parsing macro code. For example, in our commercial SAS parser we have the following rules (simplified):

    macroBody: (macroLabel | macroStatement | macroDefinition | macroTextContent)+;
    macroTextContent: ~(MACRO_DEF_END | MACRO_END);

macroTextContent is any token except two tokens that we know to be macro terminators. We have similar rules for put and other statements. Fortunately, ANTLR supports this negative match (everything except…) in the lexer and in the parser.

Effectively, the SAS macro language is an island language where the SAS language is the “sea”. The challenge here is that we want to parse as much as possible of both languages in the same file with the same parser.

That’s why we haven’t used lexical modes for macros. We want tokens inside macro bodies to be recognized as SAS tokens to get a chance at parsing them. We’ll elaborate more in the next section.

However, there could be cases where the text inside a macro cannot be lexed with a purely SAS lexer. That’s why our lexer includes the following catch-all rule at the end:

MACRO_TEXT: .;

Any character that doesn’t match any other token is a piece of MACRO_TEXT. Then, the parser will only admit MACRO_TEXT tokens inside macros, and report an error if it finds one of them elsewhere.
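The effect of a catch-all rule placed last can be reproduced with a small regex-based lexer in Python. The token set below is hypothetical and heavily reduced; only the MACRO_TEXT name comes from the rule shown above. As in an ANTLR lexer, the order of the rules matters, so the catch-all must be the last alternative.

```python
import re

# Hypothetical, heavily reduced token set; a real SAS lexer has many more rules.
TOKEN_SPEC = [
    ("MACRO_KEYWORD", r"%[A-Za-z_]\w*"),
    ("MACRO_VAR", r"&[A-Za-z_]\w*"),
    ("IDENTIFIER", r"[A-Za-z_]\w*"),
    ("NUMBER", r"\d+"),
    ("SEMI", r";"),
    ("WS", r"\s+"),
    ("MACRO_TEXT", r"."),  # catch-all: any single character nothing else matched
]
LEXER = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC),
    re.DOTALL,
)


def lex(source: str) -> list[tuple[str, str]]:
    """Return (token type, text) pairs, skipping whitespace."""
    return [
        (match.lastgroup, match.group())
        for match in LEXER.finditer(source)
        if match.lastgroup != "WS"
    ]


for token in lex("%put total: 100% done;"):
    print(token)
```

Here the colon and the lone percent sign match no other rule of this toy lexer, so they come out as MACRO_TEXT instead of causing a lexer error; it’s then up to the parser to decide where such tokens are acceptable.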

Parsing Macro Expansions

So far, we’re able to parse some SAS code with macros, and the macro language itself. However, we’re still treating the non-macro content of a macro statement as a sequence of tokens with no structure, as we’ve seen earlier:

macroBody: (macroLabel | macroStatement | macroDefinition | macroTextContent)+;

That is, we can recognize the structure of a macro statement, but we get no information about the code that it may expand into. However, chances are that we can reconstruct at least some of that information.

Take the case we’ve seen earlier:

%do Gen_Num = 0 %to 10; foo.attr(GenNum = &Gen_Num drop=ACCOUNT) %end;

Here, foo.attr etc. is just a series of tokens, e.g., IDENTIFIER, DOT, IDENTIFIER, LPAREN and so on. However, we may be able to reconstruct that code as “a part of a SET statement”. Let’s see how we may do that.

One naive way is to explicitly augment the macroBody rule with the kinds of statements that we want to be able to recognize, e.g.:

macroBody: (macroLabel | macroStatement | macroDefinition | sqlStatement | macroTextContent)+;

By adding sqlStatement, we can now recognize SQL code inside macros. Similarly, we could add all the statements that we may be interested in.

However, the code in macro bodies will often be incomplete or malformed. ANTLR is capable of recovering from certain classes of errors; still, we’ve seen that, in practice, the more types of statements we try to parse inside macros, the more likely ANTLR is to get lost when looking for the boundaries of macro code.

In practice, this has the following consequences:

- Error reporting is imprecise. Errors will appear many lines below or above the actual problem.

- Performance degrades significantly. While ANTLR employs prediction algorithms that avoid the need for backtracking, it’s still costly if, to disambiguate between different rules, ANTLR has to look way ahead in the stream.

To solve both these problems, we’ve successfully adopted a two-stage approach. As in all our parsers, ANTLR building a parse tree is only the first phase. Then, we construct an abstract syntax tree with support from our open-source library Kolasu. So, the consumers of the parser will receive an object model which is free from syntactic artifacts and idiosyncrasies and that may also offer additional features (for example, symbol resolution).

In our SAS parser, when we build the AST, we also do a best-effort attempt at parsing the bodies of macros. At that point, the contents of such bodies are flat lists of tokens intermixed with macro statements. So, we run a second pass of the parser to try to turn those lists of tokens into meaningful structure. We don’t have to rerun the lexer as we already have the tokens; that’s why we haven’t used a dedicated lexer mode for macros.

Since our parser is in Kotlin, we make use of Kotlin’s lazy property delegate to only run this second pass of our parser when the consuming code actually looks into the body of a macro. That way, we limit the performance impact, which would otherwise be significant.
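As a rough analogue of that design, here’s how the same idea looks in Python, where functools.cached_property plays a role similar to Kotlin’s lazy delegate. MacroNode and parse_body are hypothetical stand-ins, not part of our parser or of Kolasu; parse_body merely groups tokens into statements to represent the second pass, and the counter exists only to make the laziness observable.

```python
from functools import cached_property


def parse_body(tokens: list[str]) -> list[list[str]]:
    """Stand-in for the second parser pass: split tokens into ';'-terminated statements."""
    statements, current = [], []
    for token in tokens:
        if token == ";":
            statements.append(current)
            current = []
        else:
            current.append(token)
    if current:
        statements.append(current)
    return statements


class MacroNode:
    """AST node for a macro whose body is parsed only when first accessed."""

    parse_count = 0  # how many times the second pass actually ran

    def __init__(self, name: str, body_tokens: list[str]):
        self.name = name
        self.body_tokens = body_tokens  # flat token list kept from the first pass

    @cached_property
    def body(self) -> list[list[str]]:
        MacroNode.parse_count += 1
        return parse_body(self.body_tokens)


node = MacroNode("example", "a = b ; c = d ;".split())
print(MacroNode.parse_count)  # nothing has been parsed yet
print(node.body)              # the first access runs the second pass
print(node.body)              # later accesses reuse the cached result
print(MacroNode.parse_count)
```

Consumers that never look inside a macro body pay nothing for the second pass, and those that do pay for it once per macro.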

Conclusions

At the end of our journey, we can confirm that parsing a legacy language is challenging and subject to a margin of error, because legacy languages don’t follow “good practices” that make exhaustive, efficient parsing possible.

Still, we’ve found our approach to be effective at extracting a great amount of information from SAS source code with macros, while keeping adequate error handling and acceptable performance. As we’ve said from the beginning, though, this is not a perfect solution guaranteed to parse 100% of possible SAS code. But if the objective is to extract information from SAS source code to perform some analysis, or to transpile a single SAS codebase (which will use only a subset of valid SAS, to which we can tune our parser), this approach fits the bill.

This also shows, as we’ve written in several other articles, that building a good parser requires more than just finding an open-source ANTLR grammar. There will be choices to make, and ad-hoc solutions to find, that depend on the purpose of the parser and possibly on the actual codebase on which we’ll use it. That’s why you need the expertise of companies such as Strumenta.

If you’re interested in our commercial SAS parser package, you can see it in action on our Parser Bench.