In this article, we explain how to modernize legacy code running on the AS/400. This family of computers is also known by its later names: eServer iSeries, System i, and IBM Power Systems.

In discussions with clients, we found that they lacked an accessible guide to this process. We want to fill this gap by helping you understand and drive it.

Legacy modernization is an increasingly important part of software development. At first glance, you might think the reason is that there is a lot of old code out there, and new technologies work better. That is also true, but the main reason is deeper: unless you are a technology company, the value of your software lies in implementing the processes that enable your workflows.

You use software to check that some rule is applied, to help your employees do something more easily or correctly, or to add value to something you sell. However, the way we work has changed greatly in the last few years. Naturally, this has also led to large changes in software.

We Build Software Differently Nowadays

The main issue for legacy platforms is that the way we build software has also changed greatly. This leaves such platforms at a disadvantage since they were not designed to work like that.

The AS/400 platform is tightly integrated; this has some advantages, particularly when companies create all of their own software. For example, it simplifies the development and management of the platform. It offers homogeneity, simplicity, and relatively secure dedicated terminals. The problem is that it also has business, operational, and technical disadvantages that have made the approach obsolete.

If you compare how you work today with how you worked twenty years ago, you will notice that you work with many more suppliers and clients. The reasons are well known, and it is not necessary to dwell on them: the increasing specialization of companies and the need to work with companies in different environments.

Why Legacy Modernization for AS/400 Is Important but Hard

This fundamental change in the way we work hit platforms like the IBM AS/400 particularly hard, for a few reasons:

- They are midrange computers, a class of computer that has ceased to exist

- They are often used by small and medium-sized companies, which typically have a single monolithic system for every need, because more flexible arrangements require more staff to manage. So these companies have rarely had the chance to handle big transformations

- It is a tightly integrated platform

- They are very popular, so companies hope they can get away with using them until the problems become too hard to handle

Let’s see these reasons in more detail.

What Were Midrange Computers?

Midrange computers were a class of computers between mainframe computers and microcomputers. Mainframe computers were, and still are, a class of computers designed for the needs of large organizations: they have a lot of computing power, they are very reliable, and can still run old software with no changes.

Midrange computers compromised on all of these aspects, but they were generally cheaper and more flexible than mainframe computers. So they were a great match for small businesses. Microcomputers were what we now just call computers. Microcomputers squeezed out midrange computers by becoming much more powerful and a bit more reliable. The original IBM AS/400 family of midrange computers itself ended in 2013, although IBM still produces somewhat compatible lines.

An Integrated Platform Is Great Until It Is Not

An additional problem is that businesses that adopt these systems are often inexperienced in handling big changes. Unless they are manufacturing companies, their computer systems are the only big hardware they have. So their leadership has no experience handling such big changes even at the basic organizational level (i.e., how do we handle the disruption to our daily work?), let alone the technical challenge.

As we mentioned before, tight integration is a feature of the platform. This was a meaningful advantage in the past, but the approach is now less favored.

It leaves companies locked into a platform, which makes it hard to switch and thus allows suppliers to raise prices. It is harder to adopt third-party services that could be better in terms of results or costs. On top of that, it usually results in complicated, harder-to-maintain software, since a change in one part cascades through the whole system.

A Great Success Story

Finally, one aspect that is both good and bad is that the IBM AS/400 family in particular was very successful. IBM made tens of billions of dollars in sales over the years, and the system was well designed. All of this means that even today, a sizable number of organizations still use it.

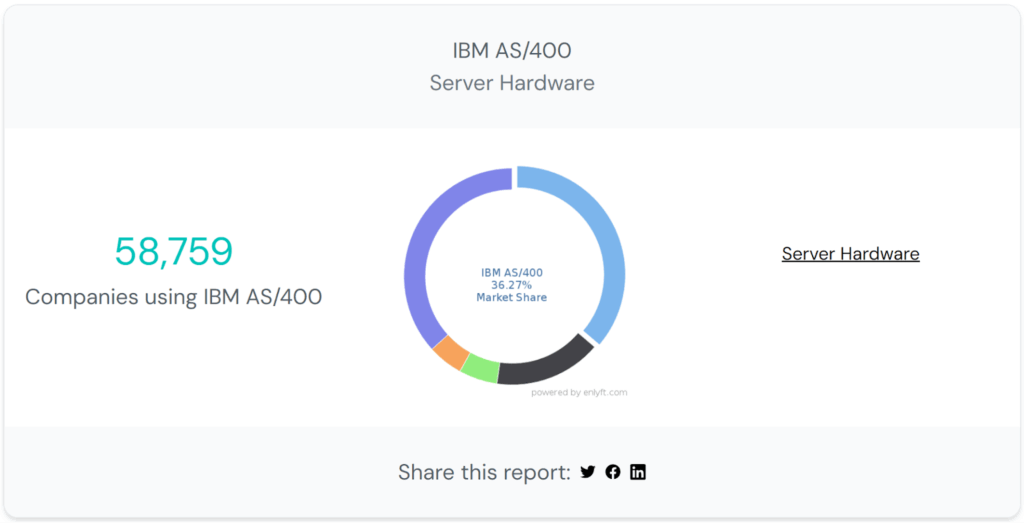

According to Enlyft, 36% of companies that have publicly available server hardware still use this platform, at least in some part of their environment. This is good because the large pool of companies and people familiar with the platform lets companies keep using it for a while, even though the original line was discontinued ten years ago.

It is bad because keeping it running gets harder every year, and companies may keep using it until replacing it is too late or too costly. It is also bad because it can stop entire industries from moving on. For example, if you are a service firm and your clients keep using it, it is hard for you to change, at least until your client base shrinks to zero or it becomes uneconomical to serve that portion of clients.

Hard, Risky, Worth it

The end result is that migrating from the AS/400 is hard, risky, and uncertain in its results:

- Business fears. The uncertainty that accompanies the whole endeavor means that the business case is not always clear, all choices look dangerous, and it is not exactly clear what you will gain

- No expertise in migration. Companies have experience running code on AS/400 but not in handling migrations

- Unknown codebase. The codebase is large and old, so companies often lack knowledge of the applications they have

- Risky migration. Migrating a large codebase is fraught with technical risks. It takes a long but unknown amount of time, which means a high but unknown budget, and it can lead to technical failure, business failure, or both. All these factors compound each other, so a migration might fail because of a lack of available expertise or money

This article will help you address these risks and start your migration journey. We will reduce risks by identifying the major technical risks, explaining the business advantages, and giving an overview of how to organize the migration, so you can better understand the process.

Symptoms of Legacy Code

Okay, we now all agree that still using the AS/400 is bad in theory, but how does it manifest itself?

There are technical issues that are obvious even to non-technical people, like 5250-based green-screen interfaces. There is no graphical interface: the default choice is a console-based interface, which end users abandoned almost 30 years ago. Modern end-user software, including mobile software, cannot have green-screen UIs, so if you need to develop such software, you cannot maintain a single codebase. You would need to build separate UIs, as separate applications, that somehow interoperate with the codebase running on the AS/400.

However, in this section, we want to go beyond these technical issues and present the business consequences of these problems.

There are a few symptoms of legacy code that directly impact your work:

- High fixed costs for hardware and licenses

- The platform is obsolete

- Hiring is difficult

- Prevent operational improvements

- Inability to handle new technical needs

- Languages are not productive

- Cannot easily work with other platforms

Let’s see them one by one.

High Fixed Costs for Hardware and Licenses

Midrange computers were originally a good deal, which is why companies bought them in droves. Specifically, they cost less to operate and maintain because IBM did not charge for them in the same way as for mainframe computers. For example, for mainframe computers you may have needed to pay based on the number of transactions.

The issue is that there are now better deals out there. Midrange computers still do not cost that much to operate, but they have high fixed costs, and the typical software lifecycle of around 3-4 years means you periodically face high costs to update the software stack. On top of that, support services and licenses for enterprise software add up, and they usually compare unfavorably with similar offerings on standard hardware.

While the exact numbers will of course vary, a migration project typically pays for itself in about 4-5 years.
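To sanity-check that figure for your own situation, a simple payback estimate divides the one-off migration cost by the yearly savings in running costs. This is a rough sketch that ignores discounting and financing; the symbols are our own shorthand, and the figures in the second line are purely hypothetical.

```latex
T_{\text{payback}} = \frac{C_{\text{migration}}}{C_{\text{legacy}} - C_{\text{new}}}
\qquad\text{e.g.}\qquad
\frac{500{,}000}{300{,}000 - 180{,}000} \approx 4.2 \text{ years}
```

Here C_legacy and C_new are the yearly costs of hardware, licenses, and support on the old and new platforms.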

The Platform Is Obsolete

The original AS/400 family is no more; in fact, the whole class of midrange computers no longer exists. It is true that IBM still produces new systems that are largely compatible with the old ones, but the focus is on maintaining existing codebases rather than adding new features. Third-party software follows the same pattern, so new productivity tools and software are not readily available on the AS/400 platform.

For example, new software tools for developers, like IDEs and common libraries are not available on the platform, which makes existing developers less productive than on a modern platform.

We would like to underline that this statement is not based on technical merits. In fact, one could argue that the platform lasted so long precisely because of its great technical merits. The problem is that companies just do not work that way anymore. So IBM and third-party suppliers focus most of their energy on maintenance. Even the most fervent supporters of the platform would not expect any innovation to come out of it.

There is no doubt that it is possible to create good software, by today's standards, on the AS/400. However, the platform does not help: it does not push you to do so, because most users of the platform do not particularly care about the issue.

Hiring Is Difficult

Most of the code on AS/400 is written in RPG, COBOL, or similar old-school languages. These are languages that few young developers know. Colleges and universities rarely teach them and there are also few professional training programs available.

They are also unappealing to developers. The end result is that it is hard to find new developers and you will have to pay them more. Even if you opt for hiring any good developer and training them, it costs money and time to make them productive, and not many people are willing to focus their career on a dead end.

Prevent Operational Improvements

Midrange computers were designed for a world in which each company developed and ran its own software stack. This also meant having a sizable IT budget to maintain such systems. There was a time when this was the only choice and therefore also the best choice. If you are using AS/400 systems, this might still be the best approach for you right now.

However, it is more likely that more flexibility would serve you better nowadays: there are other systems that are cheaper to operate or make it easier to manage cash flow because they have no upfront costs. You simply do not have this choice if you keep using the AS/400.

Inability to Handle New Technical Needs

In its heyday, the AS/400 could handle most business needs and requirements. This is no longer true. Many traditional functions of an enterprise, like log collection, data storage, and security, have become specialized services that are best handled by dedicated companies. Few companies can effectively manage the great volume of data, integrate such functions with so many third-party services, or provide top-quality service for these functions.

That is what led to the rise of companies like Datadog, which can ingest log data from many services. Most companies would be unable to handle the scale and complexity of ingesting so much data and integrating so many different services.

This new world also requires much better security. Your company needs protection not just from external threats, but also from internal software and from services that depend on third parties that could be breached. Your company also needs to handle disparate work environments, like employees working from home or overseas development centers. An old system is not ready for that. This alone can be an existential risk for a small company, if you consider that 60% of small businesses fail after a cyberattack.

Cannot Easily Work With Other Platforms

Old programming languages cannot easily work with other platforms. A common reality for many companies is that they need to create and support software on different platforms: a web service in addition to a mobile application and a desktop one.

It is hard to have a single, shared codebase for all your needs on an AS/400 system.

Legacy Languages Are Not Productive

Finally, legacy languages like RPG and COBOL are not as productive as modern programming languages. They were designed to work within the constraints of their time, but those constraints are a problem today.

To be clear: you can move on from AS/400 while still using legacy languages if you so wish. However, another potential benefit of abandoning a legacy system is that you can also abandon a legacy language.

Contemporary languages were designed precisely to solve the issues the industry encountered when using old languages at scale. While adopting a new language will not magically solve all the existing problems of your codebase, it will allow you to implement best practices and thus reduce uncertainty in the development of new features. Right now, programmers are wary of and hampered by your existing codebase; with a new language, they can do more.

Some common issues with legacy languages:

- These languages look very different from contemporary languages. This makes legacy languages difficult to learn for young developers who are familiar with languages such as JavaScript, Python, Java, or C#.

- They lack good development tools, which shorten development time.

- They do not have the vast freely available libraries of languages like Java, C# or JavaScript.

- They are less safe, lacking the automatic memory management and other helpful automatic checks of newer languages.

You can read more about the benefits of improving the quality of your codebase in this article: 5 arguments to make managers care about technical debt.

Good Things About Old Languages

It is important to be clear that legacy languages like RPG are not unmitigated disasters. They had, and still have, some positive features. In the past, programming was not necessarily a separate occupation; it could be part of a larger job description. Essentially, it was a tool to automate some tasks.

A consequence of this fact is that these languages are easier for non-programmers to use and understand than modern programming languages, which have been designed with full-time programmers in mind. This is a point that some of our clients have made. In industries where precision, reliability, and specialized expertise are a necessity, like banking or accounting, these languages can be used by different kinds of users in different ways. Some users write code, some just read it, and some talk with clients who need to use the language on their platform. The reason is simple: it is safer to teach some programming to an accountant than to teach accounting to a programmer.

This approach will not work with new languages that are more productive, exactly because they are designed for professional programmers who can use specialized tools.

How to Bring Non-Developers Onboard

There are several solutions to this issue:

- you can still keep using legacy languages for some domain-related tasks, that are crucial for your business

- you can use other simple languages designed for, and usable by, the same audience of non-expert users (e.g., VBA or similar Visual Basic-like languages)

- you can adopt a proper Domain Specific Language

Let’s see each of these options a bit more in-depth.

Keep Legacy Language Just for Them

The first option works best if your company has a well-defined group of non-technical users who use programming as part of their job description. For example, imagine you are an insurance company and you have a few analysts that write the rules for determining the profitability of a potential insurance plan using RPG. The current legacy language works fine for them and you do not want to retrain them. However, you still need to change both platform and legacy language for the rest of the company, because the current situation does not work for anybody else.

By picking this option, you can migrate most of the code to a modern programming language, but keep using your current legacy language for the core part of determining the profitability of insurance plans and write some glue code to make this combination of languages work.

Find Another Non-Developer-Friendly Language

The second option is great if the community of non-technical users that uses the legacy language has access to a simple alternative language.

A typical example is accountants and finance professionals who use VBA (Visual Basic for Applications) because it is a language that works inside Excel. For these users, Excel is a fundamental tool, and when its built-in features are not enough to do the job, they can use VBA to perform advanced calculations.

You could use the language to create all kinds of programs, but, just like legacy languages, it lacks the features and tool support that would make it a good general-purpose language for professional programmers. However, it is simple and powerful enough for the specific needs of non-professionals. So you can migrate most of the code to a modern programming language and still allow these domain experts to use VBA to do their part of the job.

Adopt a DSL

The third option is preferable when you have a well-defined group of non-technical users who program as part of their job, but the legacy language did not serve them well either. In other words, the legacy language did not work well for anybody. In such cases, you can migrate most of the code to a modern programming language. The problem is that the new language would not work well for non-technical people either, so you build your own Domain Specific Language tailored to their job.

You can choose whichever option best suits your needs. On the technical side, they all require a transpiler to migrate the old legacy language to the new one. They also require software that acts as an interpreter for the old legacy language, the simple new language, or the DSL, so that it works together with the new general-purpose programming language you have chosen. The first difference is that readily available interpreters may exist for legacy languages like RPG, and possibly for the VBA-like language your community of users already knows, whereas you will have to pay somebody to create the interpreter for your own DSL.

The other difference between the options is that they require more or less investment for training the users and for potential productivity enhancement. A DSL can dramatically increase your productivity but only in some cases.
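To make "an interpreter for your own DSL" more concrete, here is a minimal sketch of a made-up rule language for the insurance example mentioned earlier. The rule syntax, the field names (age, claims, vehicle_age), and the surcharge semantics are all hypothetical and invented for illustration; a real DSL would need a proper grammar, better error reporting, and integration with the host application.

```python
import operator

# Comparison operators the made-up rule language understands.
OPS = {"<": operator.lt, "<=": operator.le, ">": operator.gt,
       ">=": operator.ge, "=": operator.eq}

def parse_rule(line: str):
    """Parse 'IF <field> <op> <number> THEN SURCHARGE <percent>' into its parts."""
    words = line.split()
    if (len(words) != 7 or words[0].upper() != "IF"
            or words[4].upper() != "THEN" or words[5].upper() != "SURCHARGE"):
        raise ValueError(f"cannot parse rule: {line!r}")
    _, field, op, value, _, _, percent = words
    if op not in OPS:
        raise ValueError(f"unknown operator {op!r} in rule: {line!r}")
    return field, OPS[op], float(value), float(percent)

def total_surcharge(rules_text: str, applicant: dict) -> float:
    """Interpret every rule against one applicant and add up the matching surcharges."""
    total = 0.0
    for line in rules_text.splitlines():
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and comments
        field, compare, value, percent = parse_rule(line)
        if compare(applicant[field], value):
            total += percent
    return total

# Rules as an analyst might write them; the field names are hypothetical.
RULES = """
# Pricing rules maintained by analysts, not programmers
IF age < 25 THEN SURCHARGE 15
IF claims >= 2 THEN SURCHARGE 30
IF vehicle_age > 10 THEN SURCHARGE 5
"""

print(total_surcharge(RULES, {"age": 22, "claims": 0, "vehicle_age": 12}))  # 20.0
```

The point of the design is that analysts only ever touch the rules text, while programmers own the interpreter and the code that feeds it applicant data.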

Why Legacy Code Matters

We now know why legacy code on the AS/400 platform is a problem, but once you agree with that conclusion, what should you do? Why can't you just throw the code away and start from scratch? The reason is that the code is still valuable. That is the problem with legacy code: it is valuable code whose value is hard to use fully because of technological issues. This could be because the code uses a language that nobody uses anymore or because it runs on obsolete hardware.

In the case of legacy code on the AS/400 platform, it is both: most likely it is written in RPG or COBOL and the platform is not developed anymore.

There are certain pieces of code that you want to abandon: code that deals with technical details of the platform or application, like loading data into a database or logging system events. In broad terms, we can describe this category as applications that do not provide business value. It is bureaucracy in the form of code. In this section, instead, we address code that has value because your employees use it to do their work: code that you might be tempted to rewrite, given that you are already changing things.

Rewriting All the Code Is a Bad Idea™

Rewriting your whole codebase from scratch has the same risks as a legacy modernization project: it is risky, complicated, and not clear beforehand what you will gain from it. In addition to that, you have the additional costs and risks associated with creating something from zero.

The code represents an important part of the assets of a company: it embodies the way the company works. It is the sum of decades of experience. If you just throw away old code, you might be unable to fully recreate that value, because often you do not remember why things work in a certain way.

Just think about this: whatever sector you work in, your actions are shaped by decades' worth of regulations, practices, processes, and workarounds. Many rules and standard practices were born out of the necessities of a time you cannot even remember. Re-analyzing everything you do from scratch could be immensely beneficial, but it could also just be a giant investment with little return, and the second possibility is much more likely. Combining that with a technological migration is asking for trouble.

Imagine your problem is that you are living in a cramped apartment because you cannot afford a large house. The solution? Just stop being poor!

Well, yes, that would work, but how do you stop being poor? You are expressing a desire for a solution but not devising an actual strategy to build a solution.

A manual rewrite is a bit like that. How do you rewrite the software while avoiding the previous problems? The idea of rewriting everything is alluring because you can change everything! So, in theory, you could solve all of your problems. The idea of a manual rewrite is appealing because it hides the complexity of reaching your goals.

When You Should Opt for a Manual Rewrite

Technical leaders love the idea of finally getting things right. They say things like this:

I would create the software differently if I were making it today.

The statement is probably true, because the more experience you gain in a project, the better you could make it. But it is also likely that they did not make actual mistakes in the original design. Rather, they failed to anticipate future needs, or they (wisely) preferred to optimize for the present rather than an unknowable future.

If that happened with your original design, what guarantee do you have that you will get it perfectly right now?

Unless you can articulate precisely why the situation is different now, you are going to end up in the same place.

So a manual rewrite is only a good choice when you have a precise, deliberate idea of what you want to change in your company and how the new software fits into this change. In practice, this is only possible for software that performs small or well-defined tasks. This does not mean it is never a good choice, but it rarely is.

From a business perspective, you may ask yourself these questions:

- Is our business going to be the same in ten years?

- Will we work as we do now?

- Is this patently different from the situation when we created the software?

If you can confidently answer yes to all of these questions, you should look into a manual rewrite, because it would mean you now have all the information needed to build software that works for the foreseeable future.

Technical Context

Before proceeding with an overview of the migration process, let's look in more detail at the technical issues, or pain points, of the AS/400. This helps us understand the context in which we need to operate, what we need to change, and how.

Highly Compatible at the Bytecode Level

The AS/400 platform was designed to maintain compatibility without the need to recompile, even when the processor architecture changes. This works similarly to how you can reuse a Java application (compiled to bytecode) on, say, Windows and Mac, without compiling the code for each platform.

This was a great feature at the time and one of the reasons for its enduring success. We mention this obscure technical detail because it means you can go decades without changing your old code: it still works, but nobody understands exactly what it does.

EBCDIC Encoding

Extended Binary Coded Decimal Interchange Code (EBCDIC) is a character encoding developed by IBM for its mainframe and midrange families. Different encodings mean different rules for representing characters and digits, so if you read a text with the wrong encoding, you do not get intelligible data. It is not a perfect analogy, but an encoding is a bit like a language that you use to store information.

Imagine that you have to translate a text from one language to another: for the most part, it is boring, but it is clear what you have to do. That is, unless you have to translate something like poetry, which relies on features such as the sound of words (to make them rhyme) to convey meaning. Then you are in trouble, because you cannot perfectly translate this text into another language.

A lot of old code relied on these technical features, not to make data rhyme but to save memory and space. For example, a program might store a letter as a small number, such as its distance from A, or do arithmetic directly on character codes. This works in an encoding where letters have sequential codes (say, A is 21, B is 22, and so on). However, that assumption does not hold in every encoding, and then the code stops working. EBCDIC itself is a case in point: its letters are split into three separate ranges (A to I, J to R, S to Z), while in ASCII they form a single run.

EBCDIC was already an issue when dealing with external sources, since you had to translate data back and forth between encodings. When migrating code from one platform to another, you might also have to modify the code itself to make it work.
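The kind of assumption that breaks is easy to demonstrate. This sketch uses Python's built-in cp037 codec (EBCDIC US/Canada); your system may use a different, national EBCDIC code page. The function name is our own, chosen for illustration. It shows three things: arithmetic on letter codes that works in ASCII but not in EBCDIC, a sort order that changes between the two, and bytes from an EBCDIC export that only make sense when decoded with the right code page.

```python
# Python ships codecs for several EBCDIC code pages; "cp037" is EBCDIC US/Canada.

def letter_offset(ch: str, encoding: str) -> int:
    """Legacy-style trick: a letter's position computed as code(ch) - code('A')."""
    return ch.encode(encoding)[0] - "A".encode(encoding)[0]

for enc in ("ascii", "cp037"):
    # In ASCII the offsets run 0..25; in EBCDIC they jump after I and after R.
    print(enc, [letter_offset(c, enc) for c in "AIJRSZ"])
# ascii [0, 8, 9, 17, 18, 25]
# cp037 [0, 8, 16, 24, 33, 40]

# Sort order changes too: EBCDIC puts lowercase before uppercase, and digits last.
keys = ["a1", "A1", "11"]
print(sorted(keys, key=lambda s: s.encode("ascii")))  # ['11', 'A1', 'a1']
print(sorted(keys, key=lambda s: s.encode("cp037")))  # ['a1', 'A1', '11']

# Bytes exported from an EBCDIC system must be decoded with the right code page.
raw = b"\xc8\x85\x93\x93\x96"
print(raw.decode("cp037"))  # Hello
```

The sort-order difference is easy to overlook: reports, key ranges, and "greater than" comparisons on text can silently change meaning after a migration.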

Green Screens UI

We already mentioned that the platform uses a text-based interface, known as 5250 green screens. This is not very user-friendly, but it is not the main problem. These interfaces were designed basically to present forms. You list things like the name, length, and other details of a set of fields and let the AS/400 system handle where and how to show them to users. This means that most applications that ask for input or present data to users are largely unusable by today's standards. You have no autocomplete and no smart selection of the fields to present to the user (e.g., if different users need to use the same form, you cannot show some fields to a sales specialist and others to an inventory manager).

Presenting a long list of fields in a desktop application is one thing; doing the same in a web or mobile application is another. For a series of practical reasons, like how the back button works or the size of the screen, it is not the same experience. So you will have to redesign the user interface of your applications from scratch, even if you could technically keep the basic code that lists the fields in the form.
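As a minimal sketch of what "keeping the field list but redesigning the UI" can mean, the new presentation layer below reuses a form's list of fields and decides which ones each role sees. The form, field names, lengths, and roles are hypothetical, and a real application would also handle validation, layout, and permissions on the server side.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Field:
    name: str
    length: int
    roles: frozenset = frozenset()  # empty set means "visible to every role"

# Hypothetical order-entry form. On a 5250 screen, every user gets all these fields.
ORDER_FORM = [
    Field("CUSTOMER", 30),
    Field("ITEM", 15),
    Field("QUANTITY", 5),
    Field("DISCOUNT", 5, frozenset({"sales"})),
    Field("WAREHOUSE", 4, frozenset({"inventory"})),
    Field("BIN", 6, frozenset({"inventory"})),
]

def visible_fields(form: list[Field], role: str) -> list[str]:
    """Keep the original field list, but let the new UI decide what each role sees."""
    return [f.name for f in form if not f.roles or role in f.roles]

print(visible_fields(ORDER_FORM, "sales"))
# ['CUSTOMER', 'ITEM', 'QUANTITY', 'DISCOUNT']
print(visible_fields(ORDER_FORM, "inventory"))
# ['CUSTOMER', 'ITEM', 'QUANTITY', 'WAREHOUSE', 'BIN']
```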

It is Databases All the Way Down

The AS/400 comes with a database that is integrated with the operating system of the platform. We have heard more than one expert jokingly say that the whole operating system is databases all the way down. This has negative consequences. For example, you need to recompile your applications when you update the database component, which turns a sysadmin issue into a programming issue. This is because the database definition is written as code, so it is an integral part of the codebase, and therefore the code must be recompiled.

Another practical concern is that the way you organize data is crucial to your application, which is why different kinds of applications nowadays often use different databases. Because the database is integrated with the operating system, it is not particularly good for any specific kind of application. This means that it does not store data, or handle access to it, as effectively as a database chosen for the job would.

The Migration Process

Every company might have unique challenges, but there are many common features, so we can identify common patterns and cases. In particular, we found that there are four typical items to address in a migration:

- batch and data handling tasks

- migrating data/database from DB2

- apps that provide business value and you want to keep

- apps that you want to drop or refactor for technical or business reasons

The first two items relate to data issues and the last two to applications.

Batch Jobs

Batch jobs are a significant portion of an AS/400 application portfolio. They are business-critical, but of low direct value. Examples are data transformation and transaction processing jobs such as ETL (extract, transform, load), data sync, reporting and analysis, etc. These tasks are usually handled by system administrators and involve moving data around and ensuring its availability.
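As an illustration of what such a job looks like once it leaves the AS/400, here is a minimal ETL sketch: it extracts fixed-length records from an EBCDIC export, transforms them into clean values, and loads them into a relational table. The record layout, the cp037 code page, and the use of an in-memory SQLite database are all assumptions for the example; in practice the target would be a managed database or data warehouse service.

```python
import sqlite3
from decimal import Decimal

# Hypothetical layout of one fixed-length record: (column, start, end).
LAYOUT = [("cust_id", 0, 5), ("name", 5, 25), ("balance_cents", 25, 34)]
RECORD_LEN = 34

def extract(raw: bytes):
    """Split an exported file into fixed-length records and decode them from EBCDIC."""
    for start in range(0, len(raw), RECORD_LEN):
        yield raw[start:start + RECORD_LEN].decode("cp037")

def transform(record: str) -> tuple:
    """Cut the record into fields and turn the implied-decimal amount into 123.45 form."""
    row = {col: record[s:e].strip() for col, s, e in LAYOUT}
    balance = Decimal(row["balance_cents"]) / 100
    return row["cust_id"], row["name"], str(balance)  # TEXT keeps the value exact in SQLite

def load(conn: sqlite3.Connection, rows) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (cust_id TEXT PRIMARY KEY, name TEXT, balance TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()

# Usage with a one-record sample built in memory instead of a real export.
sample = ("00001" + "ROSSI MARIO".ljust(20) + "000012345").encode("cp037")
conn = sqlite3.connect(":memory:")
load(conn, (transform(r) for r in extract(sample)))
print(conn.execute("SELECT * FROM customers").fetchall())
# [('00001', 'ROSSI MARIO', '123.45')]
```

Keeping the three stages as separate functions makes it easier to swap any one of them, for example replacing the loader with a cloud data warehouse client, without touching the others.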

These tasks are prime candidates for transition to cloud services, because current best practices involve using third-party services or software rather than handling them in-house. These solutions are more professional and let you offload the management of the software to other organizations.

Jobs based on file processing can be grouped under the category of data analytics and can be migrated to services designed to handle large amounts of data, like data warehouse services, or to software like Apache Hadoop.

For jobs involving real-time processing, like logs or queues, there are dedicated services such as Datadog, which aggregates data from all kinds of sources, or software such as the Apache ActiveMQ message broker.

Migrating Databases

The integrated database is called DB2 (technically, IBM uses the name for a series of data storage technologies). Old AS/400 applications typically define the shape of their data in files described with a format unique to IBM, called DDS (Data Description Specifications). This format is not SQL, the common language used to define and handle data in relational databases, so you have to convert these definitions into SQL before adopting another database. This might also require adapting the data itself to some extent.

For example, storing a person's name in an old database might have been difficult because the encoding was designed just for English-language words. If a name contained an accented character that the encoding could not represent, you might have used an apostrophe in place of the accent. In such a case, you would need to transform apostrophes back into accents, where appropriate.
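Because "where appropriate" requires judgment, one reasonable approach is to generate suggestions and send them to a human reviewer rather than rewriting data blindly. This sketch assumes an Italian-style convention where a final vowel followed by an apostrophe stood in for a grave accent; the rule, the regular expression, and the function name are our own illustrative choices, and the last sample shows a legitimate apostrophe that the rule gets wrong.

```python
import re

# Hypothetical cleanup rule for Italian-style names: a final vowel followed by an
# apostrophe ("NICOLO'") often stood in for a grave accent ("NICOLÒ").
GRAVE = {"A": "À", "E": "È", "I": "Ì", "O": "Ò", "U": "Ù",
         "a": "à", "e": "è", "i": "ì", "o": "ò", "u": "ù"}
VOWEL_APOSTROPHE = re.compile(r"([AEIOUaeiou])'(?=\s|$)")

def suggest_accent_fix(name: str):
    """Return a suggested spelling, or None when the rule does not apply."""
    fixed = VOWEL_APOSTROPHE.sub(lambda m: GRAVE[m.group(1)], name)
    return fixed if fixed != name else None

for name in ["NICOLO' ROSSI", "D'ANGELO LUCA", "DE' MEDICI COSIMO"]:
    print(name, "->", suggest_accent_fix(name))
# NICOLO' ROSSI -> NICOLÒ ROSSI
# D'ANGELO LUCA -> None
# DE' MEDICI COSIMO -> DÈ MEDICI COSIMO   (wrong: a reviewer must reject this one)
```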

You want to migrate databases because there are many more options out there that are better suited for specific use cases. Even if you want to drop some obsolete applications to use a third-party service instead, you probably still need to migrate that data into the new service, so you need to convert the format anyway.
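Converting the format usually starts with the field definitions. On the AS/400, files are typically described in DDS, where each field has a name, a data type (for example A for character, P for packed decimal, S for zoned decimal), a length, and decimal positions. This sketch works on a hypothetical, already-parsed version of those definitions and emits a SQL `CREATE TABLE` statement; a real converter would have to parse the fixed-column DDS source and handle keys, dates, and the other data types, which here are rejected for manual mapping.

```python
# Each tuple is a hypothetical, pre-parsed version of one DDS field line:
# (field name, DDS data type, length, decimal positions).
CUSTMAST = [
    ("CUSNUM", "S", 7, 0),
    ("CUSNAM", "A", 30, None),
    ("CRDLMT", "P", 9, 2),
]

# Packed decimal maps naturally to DECIMAL, zoned decimal to NUMERIC.
SQL_TYPES = {
    "A": lambda length, dec: f"CHAR({length})",
    "P": lambda length, dec: f"DECIMAL({length}, {dec or 0})",
    "S": lambda length, dec: f"NUMERIC({length}, {dec or 0})",
}

def dds_to_sql(table: str, fields) -> str:
    """Build a CREATE TABLE statement, refusing types this sketch does not map."""
    columns = []
    for name, dds_type, length, dec in fields:
        if dds_type not in SQL_TYPES:
            raise ValueError(f"{name}: DDS type {dds_type!r} needs a manual mapping")
        columns.append(f"  {name} {SQL_TYPES[dds_type](length, dec)}")
    return f"CREATE TABLE {table} (\n" + ",\n".join(columns) + "\n);"

print(dds_to_sql("CUSTMAST", CUSTMAST))
# CREATE TABLE CUSTMAST (
#   CUSNUM NUMERIC(7, 0),
#   CUSNAM CHAR(30),
#   CRDLMT DECIMAL(9, 2)
# );
```

Failing loudly on unmapped types is deliberate: silently guessing a type during a migration is how data gets truncated.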

If you want to migrate a database for a standard business application, the typical replacement is an Oracle database, which is a relational database with a similar enterprise approach, or PostgreSQL, which caters to people looking for an open-source replacement for an Oracle database. Depending on your application, you might also want to migrate to a more flexible database like MongoDB, which is easier for developers to use.

Apps That Provide Business Value

You have apps that provide significant business value. These are most likely the apps that you are aware of (e.g., your inventory management system). You want to migrate these apps to a new platform.

Drop the Hardware, Keep Everything Else

It is easier to find support for standard AS/400 technologies such as RPG IV, COBOL, CL, and DB2/400. In contrast, older versions of RPG and code based on older systems, like the System/32, might have to be heavily adapted.

So, in some cases, software based on the latest AS/400 technologies can be rehosted largely as-is on the cloud (marketing material even mentions migrations completed in days). Both Microsoft on Azure and Amazon on AWS support this scenario, relying on third-party companies to help you with the migration. Notice that the third-party companies provide the actual software that accomplishes this, while the virtual machines are hosted on the cloud platforms.

This is the quickest way to get a working migration. It is also the one that leaves you with the highest maintenance cost. You save money and time on the migration itself, but you do not maximize long-term savings; as a compromise, it makes sense. It might also be a starting point for further changes.

Eliminate the Legacy Platform, Keep the Legacy Language

What if you use older technologies or want to have more control? For example, what if you want to keep using some legacy language or be completely free of legacy platforms?

In the first case, you can use open-source technologies like JaRIKo, an RPG interpreter written in Kotlin, to keep the RPG codebase but run it on a different platform, like the JVM. This is a good approach if you want to take back more control over the code and platform and are willing to adopt common practices and software.

It is not that you will be railroaded into using certain software: it is open-source, so you can do whatever you want. However, the path of least resistance will be the one most used by other companies.

Remove the Legacy

The third option is to use a custom transpiler to understand and transform RPG code (or COBOL) into whatever language you want.

This is the best approach if you want to start programming in another language or have other specific needs, for example, if you want to move fully to Python, Java, or C#. The other options require you to keep using legacy languages; with this one, you can fully transition to a new language.
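To give a feel for what a transpiler does, here is a toy Python sketch that translates a tiny, hypothetical subset of free-form RPG (standalone declarations, assignments, IF/ELSE/ENDIF, and DSPLY) into Python. It works line by line with string matching, which is only viable for a toy: a real transpiler parses the code into a syntax tree and must also handle RPG semantics such as case-insensitive names, fixed-point arithmetic, and indicators.

```python
import re


def transpile_rpg(source: str) -> str:
    """Translate a tiny, hypothetical subset of free-form RPG into Python."""
    out, depth = [], 0
    for raw in source.splitlines():
        line = raw.strip().rstrip(";").strip()
        if not line:
            continue
        pad = "    " * depth
        lower = line.lower()
        if lower.startswith("dcl-s "):
            # dcl-s NAME TYPE  ->  NAME = default value for that type
            _, name, rpg_type = line.split(maxsplit=2)
            is_text = rpg_type.lower().startswith(("char", "varchar"))
            default = '""' if is_text else "0"
            out.append(f"{pad}{name} = {default}")
        elif lower.startswith("if "):
            # RPG uses "=" for comparison and "<>" for inequality.
            cond = re.sub(r"(?<![<>])=", "==", line[3:]).replace("<>", "!=")
            out.append(f"{pad}if {cond}:")
            depth += 1
        elif lower == "else":
            out.append("    " * (depth - 1) + "else:")
        elif lower == "endif":
            depth -= 1
        elif lower.startswith("dsply "):
            out.append(f"{pad}print({line[6:]})")
        else:
            # Anything else is assumed to be an assignment like "x = y + 1".
            out.append(f"{pad}{line}")
    return "\n".join(out)


RPG_SOURCE = """
dcl-s total packed(9:2);
total = 40 * 3;
if total >= 100;
  dsply 'big order';
else;
  dsply 'small order';
endif;
"""

python_code = transpile_rpg(RPG_SOURCE)
print(python_code)
```

RPG string literals happen to use single quotes, which are also valid in Python, so the generated code can be checked quickly with `exec`.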

This is usually the favorite option for developers, but it can also be a great option overall for the company. At its core, the value of a language comes from the community of its users. The users build both the tools to work with the language and the libraries available for everybody to use. This works well because the community does a lot of the grunt work in your place, leaving you to do just the work that provides added value, so you can do it more cheaply. This is the opposite of the world of legacy languages, where you are forced to do all the work on your own.

The Python and Java Examples

Take Python, for instance, which has become a common language in the enterprise. A few years ago, it would have been a surprising choice for enterprise software. However, it is becoming more common because the language is easy to use and has great library support for analyzing data. So it is a language favored by scientists who need to work with a lot of data and by data analysts of all kinds. That is also why the Artificial Intelligence sector uses it widely. It brings together users coming from different worlds, like academia and enterprise, and from different sciences and engineering fields. This multiplies the value of the work done by everybody else. If you are interested specifically in moving from RPG to Python, we have accumulated enough experience in this task that we offer a dedicated service: Ready-to-go RPG to Python Migration.

Java is also a common language to migrate to and a traditional choice for the enterprise. This is largely for the same reason: a lot of the right people, namely other enterprise users, have adopted it. If you are interested in a custom transpiler, you can read more about our service: Transpilers.

Changing the UI

Whatever option you pick for the main code, there is a separate discussion to have about the UI. As we mentioned before, traditional AS/400 applications use green-screen UIs, and these are not viable anymore. That is the first reason to consider the UI a separate issue: it obviously needs to change.

The second reason is that it is relatively easy to change the UI separately from the rest of the code. Precisely because it is a separate concern, most platforms let you use a dedicated language or system to define the interface. You can keep the same business code and provide different interfaces for different applications on different platforms. In AS/400 applications, too, the code that defines the interface usually lives in separate files (display files).
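A minimal Python sketch of this separation: one business rule and two interchangeable renderers, a fixed-width text view reminiscent of a terminal screen and an HTML table for the web. The `Customer` record and the function names are hypothetical; the point is that neither renderer contains business logic, so you can add or replace interfaces without touching the rule.

```python
from dataclasses import dataclass
from html import escape


@dataclass
class Customer:
    code: str
    name: str
    balance: float


def customers_over_limit(customers, limit: float):
    """Business rule: it knows nothing about screens or web pages."""
    return [c for c in customers if c.balance > limit]


def render_text(customers) -> str:
    """Fixed-width rows, in the spirit of a terminal screen."""
    return "\n".join(f"{c.code:<6}{c.name:<20}{c.balance:>10.2f}" for c in customers)


def render_html(customers) -> str:
    """The same data as an HTML table for a web front end."""
    rows = "".join(
        f"<tr><td>{escape(c.code)}</td><td>{escape(c.name)}</td>"
        f"<td>{c.balance:.2f}</td></tr>"
        for c in customers
    )
    return f"<table>{rows}</table>"


customers = [Customer("C001", "Rossi SpA", 50.0), Customer("C002", "Smith & Sons", 1200.5)]
flagged = customers_over_limit(customers, limit=1000)
print(render_text(flagged))
print(render_html(flagged))
```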

In most cases, you actually do not want the same interface on different platforms, since each works better for different tasks. For example, a mobile device works well for presenting information, while a PC also works well for editing data. So, a giant list of forms might work on a PC, but it would be unusable on a mobile device.

However, as a first step, you can simply transpile the original interface into another language. Then you can gradually adapt the interface to each application and device.
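As a rough sketch of this first step, the Python code below turns a simplified description of a green screen (fields with row, column, name, label, and length) into a plain HTML form, preserving the original top-to-bottom, left-to-right order. The `ScreenField` structure and the sample screen are hypothetical; extracting such a representation from real display files is a separate parsing job.

```python
from dataclasses import dataclass
from html import escape


@dataclass
class ScreenField:
    """Hypothetical simplified description of one field on a green screen."""
    row: int
    col: int
    name: str
    label: str
    length: int
    editable: bool = True


def screen_to_html(title: str, fields) -> str:
    """Emit a plain HTML form with fields in the original screen order."""
    lines = ["<form>", f"  <h1>{escape(title)}</h1>"]
    for field in sorted(fields, key=lambda f: (f.row, f.col)):
        attrs = f'name="{escape(field.name.lower())}" maxlength="{field.length}"'
        if not field.editable:
            attrs += " readonly"
        lines.append(f"  <label>{escape(field.label)} <input {attrs}></label>")
    lines.append("</form>")
    return "\n".join(lines)


ORDER_SCREEN = [
    ScreenField(5, 2, "ORDQTY", "Quantity", 5),
    ScreenField(3, 2, "CUSNUM", "Customer", 7),
    ScreenField(3, 30, "CUSNAM", "Name", 30, editable=False),
]
html_form = screen_to_html("Order Entry", ORDER_SCREEN)
print(html_form)
```

The result is intentionally a literal translation; adapting the layout to each device is the later, gradual step.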

In short, even if you want to pick the first option (using the same code but on the cloud rather than on a physical IBM machine), you may want to have a plan specifically to create a new, more accessible UI. An HTML (web) UI is typically the first choice because the code can work everywhere.

Apps That You Want to Significantly Refactor or Abandon

Typical AS/400 users have developed a custom software stack, writing most of their code internally. This means that you have a lot of code that provides no business advantage and no distinctive value. It is just code that you developed along the way to getting business value.

This is a broad category of software that basically includes all code outside your area of expertise or interest. Of course, the exact nature of this code depends on your industry. However, typical examples are libraries or utility functions that perform mathematical operations (e.g., calculus), handle holidays for accounting purposes, etc. Third-party software that does the job better than what you have can replace this code.

This case also includes software that is both old and not particularly valuable, so you do not want to spend much effort porting it to a new platform and would rather replace it with an alternative solution.

A Flexible Reality

There are two issues with this category: the valuable code is often intermingled with this kind of software, and you want to keep changes to business apps to a minimum during the migration. These two aspects are obviously in conflict.

You want your valuable software to get rid of the dependency on useless software but you also need to keep changes to a minimum. That is because it is hard to ensure that business code is working properly if you change multiple things at once. What if you have a bug? Is it due to the new platform or the refactoring? It is hard to tell. We are going to see how to deal with this problem later in the Testing Code section. For now, we want to continue with understanding the strategy.

The practical result is that you start the migration process with a list of apps that you surely want to keep and another one for the ones you want to drop. There is a third list of maybes.

The following section will help you whittle down this list by increasing your understanding of your existing codebase.

Understanding Your Codebase

To better evaluate your options for legacy modernization and decide how to proceed, you need to understand your codebase. The stumbling block is that you probably do not know your codebase that well. You do not know what components make up each system. For instance, how exactly is the code for your inventory management organized? Which files belong to each component?

You probably do not know. There is no shame in admitting that. It is actually the reality at most companies. If you think about it, you probably cannot document how each work process is organized, you cannot explain every detail of how your marketing department works, and so on…

So, it is no wonder that the same is true for your codebase, especially code in old languages that were not designed with project structure and organization in mind. The good news is that newer languages and development tools usually help in organizing your code effectively. The problem is that, before you can reach this new reality, you have to address this uncertainty to perform an effective migration. You need to understand your code.

This is a big topic in itself, with entire books devoted to it, like Your Code as a Crime Scene. Here we just want to give you an overview of your options.

Exploit Your Version Control System

If you are already using a version control system, you can take advantage of its logging features to perform a first, high-level analysis. For example, by looking at which files developers often edit together in a commit, you can reconstruct the broad structure of components.

There are tools that use your version control history to help you understand your code. One of these tools is Code Maat, which supports git, Mercurial (hg), svn, Perforce (p4), and Team Foundation Server (tfs).

This is a good starting point since many analytical tools are language-specific, but this one just requires using a version control system.
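To show the idea behind this kind of analysis, here is a simplified Python sketch of change coupling: it counts how often pairs of files appear in the same commit and relates that count to how often each file changes. It is loosely inspired by the coupling analysis in Code Maat, not a reimplementation of it. The log text is assumed to come from `git log --name-only --pretty=format:--%h`, which prints each abbreviated commit hash prefixed by `--`, followed by the files that commit touched; the file names in the sample are hypothetical.

```python
import subprocess
from collections import Counter
from itertools import combinations


def read_git_log(repo: str) -> str:
    """Return the history of `repo` with the files touched by each commit."""
    result = subprocess.run(
        ["git", "-C", repo, "log", "--name-only", "--pretty=format:--%h"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def parse_commits(log_text: str) -> list:
    """Split the log into one set of file paths per commit."""
    commits = []
    for line in log_text.splitlines():
        if line.startswith("--"):
            commits.append(set())  # header line of a new commit
        elif line.strip() and commits:
            commits[-1].add(line.strip())
    return commits


def change_coupling(commits, min_shared: int = 2):
    """Rank file pairs by how often they change together."""
    revisions = Counter()  # number of commits touching each file
    shared = Counter()     # number of commits touching both files of a pair
    for files in commits:
        revisions.update(files)
        for pair in combinations(sorted(files), 2):
            shared[pair] += 1
    ranked = []
    for (a, b), together in shared.items():
        if together >= min_shared:
            degree = together / ((revisions[a] + revisions[b]) / 2)
            ranked.append((a, b, together, round(degree, 2)))
    return sorted(ranked, key=lambda row: row[3], reverse=True)


# Hypothetical output of read_git_log() for three commits.
SAMPLE_LOG = """--a1b2c3
INVMGT.rpgle
INVDSP.dspf

--d4e5f6
INVMGT.rpgle
INVDSP.dspf

--0a1b2c
INVMGT.rpgle
PAYROLL.rpgle
"""

print(change_coupling(parse_commits(SAMPLE_LOG)))
```

Pairs with a high degree of coupling are candidates for belonging to the same component, even when nothing in the code says so explicitly.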

Dependency Analysis

The next step would be to use static analysis tools to analyze your code. You want to understand how each part depends on others. This would allow you to group blocks of code such as functions, subroutines, and procedures into components and these components into systems.

There is both open-source and commercial software for this purpose. A few years ago, we reviewed NDepend, a popular commercial tool for .NET that would be great for this kind of architectural analysis. Unfortunately, there is probably no comparable tool for AS/400 and languages like RPG.

The second problem is that this process works for recent languages but rarely for legacy ones. The reason is that old legacy languages did not provide any guidance on organizing code: it is mostly procedural code. Architecturally speaking, the program is basically a giant list of top-level functions that can all call each other. So, even if you do perform dependency analysis, it often results in big, tangled graphs that are hard to understand. The solution to this particular issue is to use alternative visualizations, like a dependency matrix, to find patterns more easily.
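A dependency matrix lists the same components on both axes and marks a cell when the row depends on the column, which stays readable where a graph turns into a tangle. Here is a minimal Python sketch, assuming you already have a call graph (which program calls which) from some earlier analysis; the program names are hypothetical.

```python
def dependency_matrix(calls: dict):
    """Return (names, matrix) where matrix[i][j] == 1 if names[i] calls names[j]."""
    names = sorted(set(calls).union(*calls.values()))
    index = {name: i for i, name in enumerate(names)}
    matrix = [[0] * len(names) for _ in names]
    for caller, callees in calls.items():
        for callee in callees:
            matrix[index[caller]][index[callee]] = 1
    return names, matrix


def format_matrix(names, matrix) -> str:
    """Text rendering: one row per program, columns numbered like the rows."""
    width = max(len(n) for n in names)
    header = " " * (width + 4) + " ".join(str(j % 10) for j in range(len(names)))
    rows = [
        f"{i:>2} {name:<{width}} " + " ".join("X" if cell else "." for cell in row)
        for i, (name, row) in enumerate(zip(names, matrix))
    ]
    return "\n".join([header] + rows)


# Hypothetical call graph extracted from the legacy code.
CALLS = {
    "ORDENTRY": {"CALCTAX", "GETCUST"},
    "INVOICE": {"CALCTAX", "GETCUST"},
    "CALCTAX": {"GETRATE"},
}

names, matrix = dependency_matrix(CALLS)
print(format_matrix(names, matrix))
```

Columns with many marks are widely shared utilities, while rows that mark the same columns hint at a common component. Dedicated tools also reorder rows and columns to make such clusters easier to spot.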

Get Your Own Tools

The solution to these two problems is to have your own tools to analyze code and detect patterns. However, you may lack the expertise, or find it uneconomical to invest in creating such tools for one-time use. So, your best option might be to hire language engineering experts to create such tools for you.

With this approach, you can also get custom tools that provide explicit guidance for your use case. For instance, you can identify the methods and files that make up some functionality that you want to drop and replace with a cloud service. Then a custom tool can warn you which parts of the software you want to keep rely on these features.
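A minimal sketch of such a check in Python, assuming you already have the call graph and have labeled the programs to keep and the ones to drop (all names are hypothetical). For each program you want to keep, it runs a breadth-first search over the calls and, if it reaches a dropped program, reports the shortest call path that leads there, so you know which link needs to be cut.

```python
from collections import deque


def find_dependencies_on_dropped(calls: dict, keep: set, drop: set) -> dict:
    """Map each kept program to a shortest call path reaching a dropped one."""
    report = {}
    for start in sorted(keep):
        parent = {start: None}  # remembers how each program was reached
        queue = deque([start])
        while queue:
            node = queue.popleft()
            if node in drop and node != start:
                path = []
                while node is not None:  # walk back to the starting program
                    path.append(node)
                    node = parent[node]
                report[start] = list(reversed(path))
                break
            for callee in sorted(calls.get(node, ())):
                if callee not in parent:
                    parent[callee] = node
                    queue.append(callee)
    return report


# Hypothetical call graph: HOLIDAYS is a utility we plan to replace.
CALLS = {
    "ORDENTRY": {"CALCTAX", "GETCUST"},
    "CALCTAX": {"HOLIDAYS"},
    "REPORTS": {"GETCUST"},
}

report = find_dependencies_on_dropped(CALLS, keep={"ORDENTRY", "REPORTS"}, drop={"HOLIDAYS"})
print(report)
```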

When we build a transpiler for our clients, this is the sort of thing that we do as preliminary work, to better assess how difficult it would be to adapt the whole codebase to their new platform and help our clients choose how they want to proceed. You can read more about our approach in the article: Audit & Analysis for Language Migration Projects.

Testing Code

You now have a more precise list of the parts of your codebase that you want to keep and the ones you want to eventually drop. The key word is "eventually", because you might not be able to do it immediately during the migration process. At the moment, you just know what you want to achieve. You reached this point based on business needs (e.g., what things we want to keep in-house) and practical costs (e.g., how much the code is intertwined). Now you need a method to effectively implement these changes. For that, you need testing.

Previously, we mentioned that it is important to keep changes to a minimum, because every change increases the number of bugs and the uncertainty in the migration. However, you also obviously want to limit the resources invested in maintaining code that you think is useless.

Testing is the solution, but it is also often a problem with legacy code: legacy systems often have no tests, because the code itself is not easy to test. The reason is that legacy languages do not support good organization, so the code is often messy.

A Strategy for Testing the Migration Based on Seams

The fundamental problem is that you cannot add tests for all your legacy code. It would be a lot of work for little benefit. The role of testing is that of reducing risks and thus ensuring predictability in the cost of maintaining your codebase. That is crucial for your new codebase, but it is not worth the effort for a codebase that you already know you want to radically change.

So, you want to focus on testing specific parts that are relevant and will remain largely the same in the new codebase. These are the places where tests can be the most valuable and it makes sense to make a limited investment there. Now, it is easy to state such an objective in theory, but how can you identify such places in practice? Especially since you cannot predict how the code itself will change.

The practical answer is that you are going to identify the different components of your system and their interfaces. Interfaces are the places where different components interact with one another, so they naturally tend to remain the same, even if the components change. For example, all the different parts of your software will ask the database component to store some data in the same way. Looking for seams is how you identify these interfaces.

Break at the Seams

So the first step to address this is to break your code at the seams. Michael Feathers invented the term in Working Effectively with Legacy Code. A seam in software is a place where two parts of the software meet and where something else can be injected. An alternative way to say it: it is a place where you can change the behavior of the code without changing the code itself.

A very simple example is this: a function call is a seam because you can change what the called function does. You can change the code inside the function that is called, without changing the function call. So, the same function call will do something different. It is also the most common seam in legacy code. In more recent, object-oriented languages, a seam could be an object rather than one function call.
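Here is a minimal Python sketch of a function-call seam, with hypothetical names. `invoice_total` calls a tax-rate function, and the caller decides which function that is: in production it would be the real lookup, in a test a fake with known values. Feathers calls the place where you make that choice the enabling point; here it is simply a function parameter, while in a legacy language the same effect might come from calling or linking a different program.

```python
def lookup_tax_rate(region: str) -> float:
    """Production version: in the real system this would query the database."""
    raise RuntimeError("no database available in this sketch")


def invoice_total(amount: float, region: str, tax_rate=lookup_tax_rate) -> float:
    # The call to tax_rate() is the seam: the caller decides what it does.
    return round(amount * (1 + tax_rate(region)), 2)


# In a test, a fake with known values is passed at the enabling point,
# without editing invoice_total itself.
def fake_tax_rate(region: str) -> float:
    return {"IT": 0.22, "DE": 0.19}[region]


print(invoice_total(100.0, "IT", tax_rate=fake_tax_rate))  # 122.0
```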

We are interested in identifying architecturally meaningful seams, so not just any function call will do. You want to identify seams that govern a specific component or a larger piece of functionality: for example, a function call that retrieves data from a database, or a series of function calls that implement something like tax calculations, which you can then turn into a single seam.

The seams are the points where you can separate your code. From the strategic point of view, you want to find seams that will allow you to safely drop the code you are not interested in. From a practical point of view, seams are the place where you can test your software. You want to use the seams to test what your code does before the migration, to ensure that after the migration the code will work the same.

Focus on Testing Results

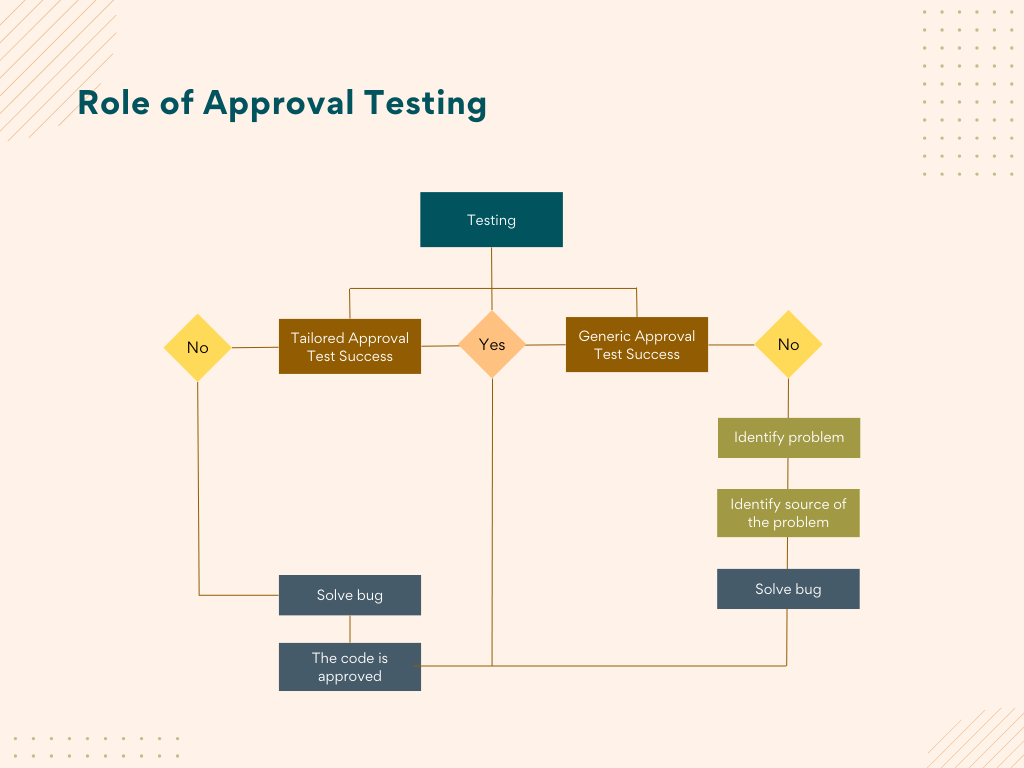

The second kind of testing you want to focus on has different names: characterization testing, approval tests, etc. The gist is that you want to record an input and a confirmed good result and then make sure that the result does not change after you change your code. Basically, you are testing that results do not change.

You do not need to make it fancy: you can save input and output in files and then read them back to run the test. Think about approval testing as a user of the software would: you provide the same input and expect the same output.
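Approval testing really can be that simple. The Python sketch below runs the trusted legacy logic once, saves the input/output pairs to a JSON file, and later replays the saved inputs through the new code, reporting every output that changed. The discount rule and the file name are hypothetical, and the rewritten version deliberately contains a boundary bug to show what a failure looks like.

```python
import json
import tempfile
from pathlib import Path


def record_approved(path: Path, func, inputs) -> None:
    """Run the trusted (legacy) logic once and save input/output pairs."""
    cases = [{"input": item, "output": func(item)} for item in inputs]
    path.write_text(json.dumps(cases, indent=2), encoding="utf-8")


def verify_approved(path: Path, func) -> list:
    """Replay the saved inputs through func and report changed outputs."""
    mismatches = []
    for case in json.loads(path.read_text(encoding="utf-8")):
        actual = func(case["input"])
        if actual != case["output"]:
            mismatches.append(
                {"input": case["input"], "expected": case["output"], "actual": actual}
            )
    return mismatches


# Hypothetical business rule: 10% discount on orders above 1000.
def legacy_discount(order):
    return round(order["amount"] * 0.9, 2) if order["amount"] > 1000 else order["amount"]


# A rewritten version with a subtle boundary bug (>= instead of >).
def new_discount(order):
    return round(order["amount"] * 0.9, 2) if order["amount"] >= 1000 else order["amount"]


with tempfile.TemporaryDirectory() as tmp:
    approved = Path(tmp) / "discount.approved.json"
    record_approved(approved, legacy_discount, [{"amount": a} for a in (500, 1000, 2000)])
    problems = verify_approved(approved, new_discount)

print(problems)
```

Here inputs and outputs must be JSON-serializable; for other data, any format you can reliably read back works just as well.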

Approval tests are the answer to the question: does the software behave as it should?

An important consideration is that you want to use approval testing in a slightly different way during the migration process and after the development has firmly moved onto the new platform.

Select Tests Appropriately for Each Phase

You want to select a set of meaningful and carefully selected examples for the approval tests that will be part of the regular development cycle. These tests should verify that important common cases are handled correctly and some nasty bugs that hit you in the past are solved.

For your approval testing specifically during the migration process instead, it makes sense to use a firehose approach. If you are confident that your current legacy code is producing the right results, just save all the input and output you can and use it for approval testing. Does your software handle transactions? Save all the transactions you make for a month. You can then use all this data for your approval testing.
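One way to implement the firehose approach, sketched in Python under the assumption that you can intercept the legacy logic at a seam (here simulated with a Python function): a decorator appends every call's input and output to a JSON Lines file while the system runs normally, and a replay function later feeds all recorded inputs to the migrated code. The fee rule and file name are hypothetical, and the short loop stands in for a month of real transactions.

```python
import functools
import json
import tempfile
from pathlib import Path


def record_calls(log_path: Path):
    """Decorator: append every call's input and output to a JSON Lines file."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(payload):
            result = func(payload)
            with log_path.open("a", encoding="utf-8") as log:
                log.write(json.dumps({"input": payload, "output": result}) + "\n")
            return result
        return wrapper
    return decorator


def replay(log_path: Path, new_func) -> list:
    """Feed every recorded input to the migrated code; collect differences."""
    failures = []
    with log_path.open(encoding="utf-8") as log:
        for line in log:
            case = json.loads(line)
            actual = new_func(case["input"])
            if actual != case["output"]:
                failures.append({**case, "actual": actual})
    return failures


with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "transactions.jsonl"

    @record_calls(log_file)
    def legacy_fee(txn):  # hypothetical legacy rule, wrapped while in production
        return 2 if txn["amount"] < 100 else 5

    for amount in (10, 99, 100, 250):  # stands in for a month of real traffic
        legacy_fee({"amount": amount})

    def migrated_fee(txn):  # the new implementation under test
        return 5 if txn["amount"] >= 100 else 2

    failures = replay(log_file, migrated_fee)

print(failures)  # []
```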

When moving the code from the development branch to production you want something in between the two previous approaches. The code would have already passed standard approval testing for development, so you just need to run some more approval testing of random real-life data to catch a few more errors. By random real-life data, we mean both literally random real-life data and tests that have no specific technical reason but might have a business one.

For example, if you have a client that is particularly important to you, you might decide that it makes sense to check that a new production version of your code does not cause problems for this particular client. You can do that by checking all their recent data or inputs.
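The selection for a release can be expressed in a few lines. This Python sketch, with a hypothetical case format, takes every recorded case for one important client plus a random sample of the others. Passing a fixed seed makes the sample reproducible, which helps when you need to rerun a failing selection.

```python
import random


def select_release_cases(cases, key_client: str, sample_size: int, seed=None):
    """All recorded cases for one key client, plus a random sample of the rest."""
    key_cases = [c for c in cases if c["client"] == key_client]
    others = [c for c in cases if c["client"] != key_client]
    rng = random.Random(seed)  # a fixed seed makes the sample reproducible
    return key_cases + rng.sample(others, min(sample_size, len(others)))


# Hypothetical recorded invoices.
CASES = [
    {"client": client, "invoice": n}
    for n, client in enumerate(["ACME", "Beta", "Gamma", "ACME", "Delta", "Beta"])
]

selected = select_release_cases(CASES, key_client="ACME", sample_size=2, seed=42)
print(selected)
```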

Why Choose a Different Approach

| Phase | Approach | Reasoning |
|---|---|---|
| Migration testing | Firehose approach: record all input and output before the migration and check that the results are the same after the migration | Your main concern is to catch mistakes introduced by the migration |
| Standard development testing | Select meaningful tests covering previous bugs and common cases | Tests should aid development, not impede it: they must be few and help developers identify where the mistake is |
| Moving development code into production | Select meaningful tests plus a few random ones | You want to catch errors before your users do |

The reasoning behind these discrepancies is that testing everything takes a lot of time and so does understanding the results of testing. If testing takes hours, developers cannot make it a part of their regular development workflow, because they would not be able to work.

They also need failing tests to be intelligible. If an approval test designed to check invoices for European clients fails, the developer knows they need to check the code that handles European clients. If an approval test for a random invoice fails, you might have caught an error, but the developer will need more time to understand what exactly went wrong. So, it is inefficient to have a lot of such tests at development time.

By the time of the migration or the move into production, your team should have squashed most bugs. So you can test everything that is feasible to test: failures should be rare at that point, and you want to catch any remaining errors before production.

Summary

We have reached the end of this article but you are still just at the beginning of your legacy modernization journey. You have learned:

- the big issues in legacy modernization for AS/400

- how the overall migration process works

- an introduction to performing the various steps in front of you

To explore more practical options, you can go deeper into the Amazon AWS ecosystem with the article on their blog: Demystifying Legacy Migration Options to the AWS Cloud. We are sure that Microsoft Azure provides similar options; however, we found that only AWS presents them clearly to the general public. If you want the same information from Microsoft, you need to talk to a salesperson.

How to Improve Legacy Code

In order to keep the article manageable we did not talk about another challenge: even if you migrate the code to a new platform you still have legacy code to improve. If you need more help with the practical issues of working with legacy code we can suggest the website: https://understandlegacycode.com/. It talks about the problems and the solutions to effectively work with legacy code. It is going to be a useful resource for developers and software architects.

Software architects and technical leaders in general could also benefit from these books:

- Michael Feathers, Working Effectively with Legacy Code

- Adam Tornhill, Software Design X-Rays

The first one can be considered the bible of dealing with legacy code; it is the book most often cited by people working in the field:

This book provides programmers with the ability to cost effectively handle common legacy code problems without having to go through the hugely expensive task of rewriting all existing code. It describes a series of practical strategies that developers can employ to bring their existing software applications under control.

The second is a practical guide to understanding and fighting against the often nebulous term technical debt:

Are you working on a codebase where cost overruns, death marches, and heroic fights with legacy code monsters are the norm? Battle these adversaries with novel ways to identify and prioritize technical debt, based on behavioral data from how developers work with code.